How to Pass a Competency-Based Interview for MDB Roles

You’ve got the interview invite open, the job description in another tab, and a growing suspicion that generic advice won’t get you through an MDB panel.

That instinct is correct.

If you’re interviewing with the World Bank, IMF, ADB, AfDB, IFC, or a similar institution, you’re walking into a process designed to test evidence, judgment, and credibility under pressure. Your résumé got you in the room. Your competency answers decide whether you stay in the process.

Most candidates prepare the wrong way. They read a list of common questions, jot down a few STAR stories, and hope they can improvise. That approach breaks fast in MDB interviews because the panel is listening for much more than a neat anecdote. They want proof that you can operate in complex institutions, influence stakeholders with competing agendas, and deliver results that matter in a development context.

If you want to know how to pass a competency-based interview, treat it like a professional exercise in evidence, not a personality test. That shift changes everything.

Why Competency Interviews Are the Gateway to MDB Careers

You join the panel call. One interviewer asks about stakeholder management in a fragile operating context. A second follows with a question on delivery under tight deadlines. A third asks what you would do differently. In an MDB interview, that sequence is deliberate. The panel is testing whether your judgment holds up from more than one angle.

That format exists because these institutions need a hiring method with stronger predictive value than an informal conversation. According to an academic review of competency-based interviewing, competency-based interviews predict job performance better than unstructured interviews, and structured interviews built on experience-based questions reach a validity coefficient of 0.51, proving more than twice as effective as unstructured methods.

MDBs rely on this approach because the cost of a weak hire is high and visible. One poor appointment can slow project preparation, weaken client trust, create friction across matrixed teams, and damage execution in environments where political judgment and delivery discipline both matter. In many roles, the panel is hiring for work that sits close to lending, advisory, policy, supervision, or institutional reform. They cannot afford someone who interviews well but executes poorly.

What the panel is actually testing

The panel wants evidence of repeatable behavior, not polished self-description. That distinction matters.

They are listening for signs that you can:

Work across institutional lines: align country counterparts, internal reviewers, and external partners without losing pace

Handle ambiguity well: move a piece of work forward when instructions are incomplete, incentives conflict, or the context shifts

Deliver under scrutiny: produce analysis, recommendations, or operations that stand up to challenge from senior colleagues and clients

Use sound judgment: decide when to escalate, when to push back, and when a compromise protects the objective

Practical rule: Every answer must show behavior under pressure, not a résumé summary.

In MDB interviews, a good answer does more than recount a task. It shows how you assessed the situation, what constraints you faced, which trade-offs you made, and what happened because of your actions. That is one reason generic STAR preparation often falls short. A standard STAR answer can describe activity. A strong MDB answer has to show institutional judgment, development context, and how your result would stand up in a panel scoring discussion. If your example involves program delivery, policy implementation, or portfolio performance, it helps to understand how MDBs assess outcomes through results-based management in development programs.

Why this format favors prepared candidates

This interview format rewards candidates who prepare with discipline. The scoring is usually tied to defined competencies, and panel members often compare notes against the same evidence standard. Charisma helps less than candidates expect. Specificity helps more.

I have seen technically strong applicants lose marks because they answered at the level of responsibilities instead of decisions. I have also seen quieter candidates score well because they gave clear examples, named the constraint, explained the trade-off, and showed measurable or observable impact. That is the unwritten rule. The panel does not need your whole career story. It needs proof that you have already behaved in ways that fit the role.

A precise answer makes the panel’s job easier. In MDB hiring, that usually improves your score.

Decoding the Competency Framework of Top MDBs

A candidate walks into an MDB panel convinced they are ready because they know the sector, know the project cycle, and care about development impact. Twenty minutes later, they are struggling. The panel keeps returning to the same point in different language: how did you influence, decide, prioritize, and deliver under institutional constraints?

That is the actual competency framework.

MDB job descriptions often look generic on paper. Terms such as client orientation, drive for results, and collaborative leadership can sound interchangeable. In interview scoring, they are precise. Each one points to a set of behaviors the panel expects to hear, and panel members usually test the same competency from different angles to see whether your example still holds up.

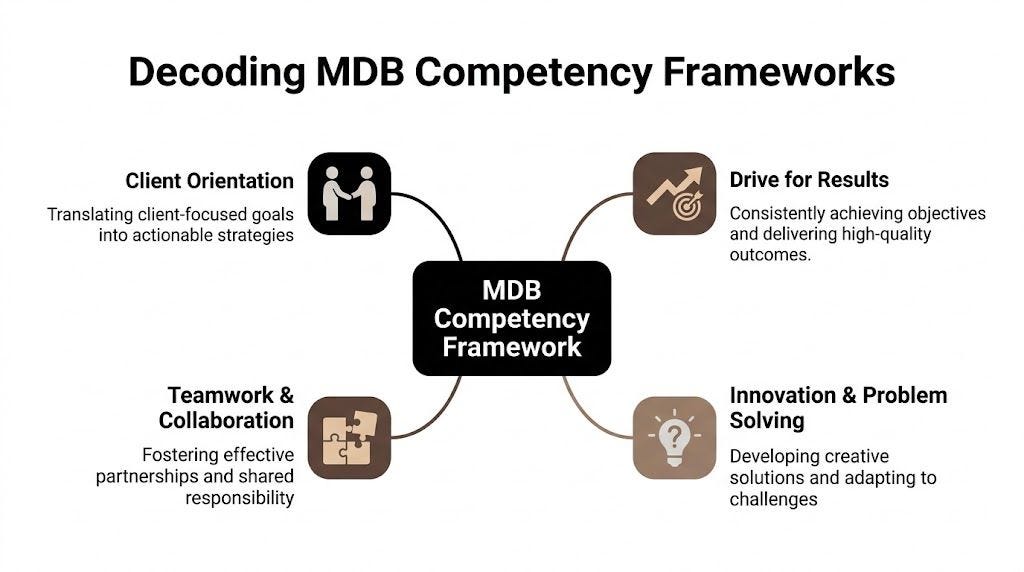

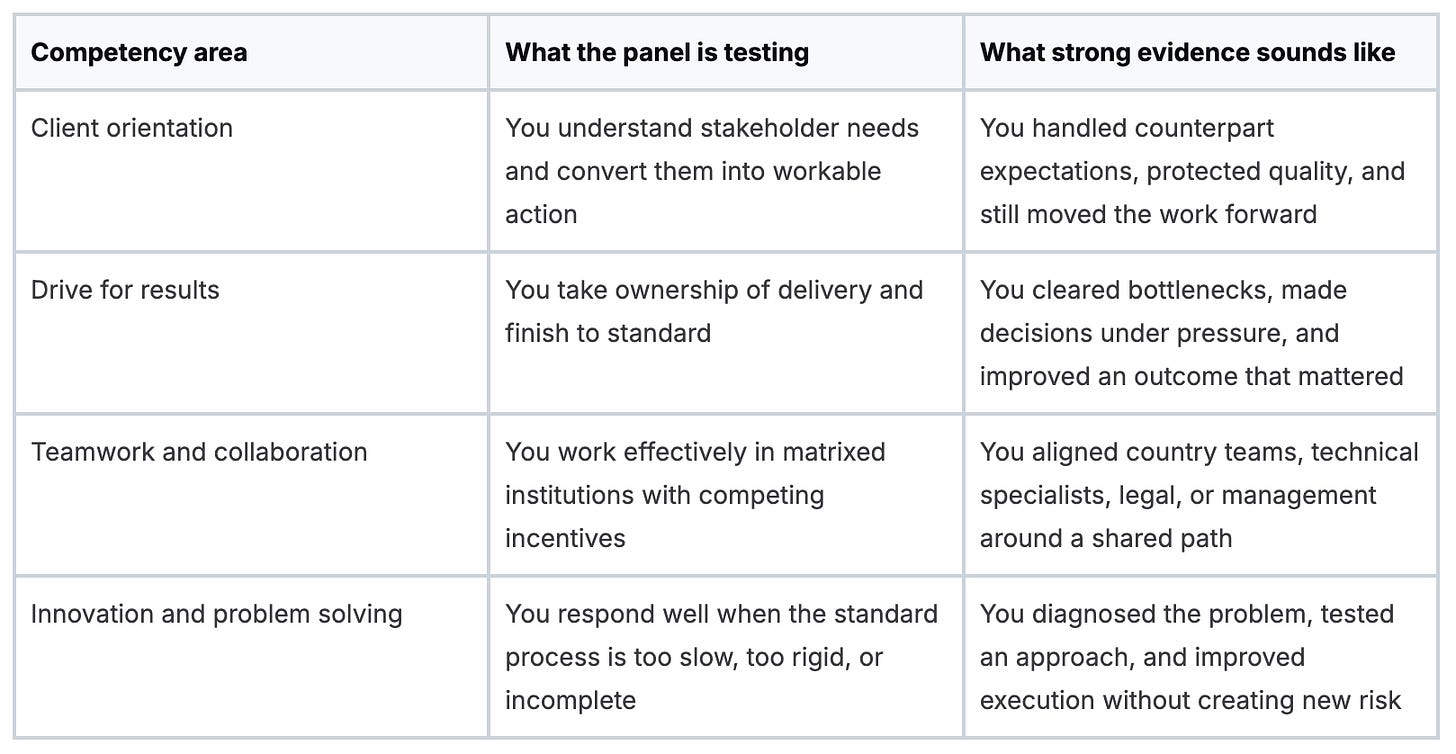

The four buckets behind most MDB competencies

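Across major MDBs, the wording changes but the scoring logic is familiar. It usually clusters around four areas: client orientation, drive for results, teamwork and collaboration, and innovation and problem solving.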

A strong answer usually touches more than one bucket. That is one of the unwritten rules in MDB interviews. The panel may ask about collaboration, but the example that scores well often also shows judgment, delivery discipline, and political awareness.

What these competencies mean in MDB interviews

Client orientation is rarely about being pleasant or responsive. In MDB settings, it often means handling pressure from government counterparts, donors, sponsors, or internal clients without promising what the institution cannot deliver. Good answers show judgment. You listened, clarified the need, managed expectations, and produced something useful.

Drive for results means disciplined execution in a system full of constraints. Panels listen for how you handled delays, weak inputs, clearance issues, shifting priorities, or fragile implementation capacity. They want to hear what you did when the work became difficult, not that you stayed committed to the mission.

Teamwork and collaboration carry extra weight in MDBs because the work is rarely owned by one person. A candidate may say, “I worked closely with colleagues across teams.” That sounds fine but scores weakly. A stronger answer names the tension. Perhaps the sector team wanted speed, legal wanted tighter language, and the country office was protecting the client relationship. Then it shows how you got alignment without formal authority.

Innovation and problem solving are also judged differently here than in the private sector. Panels are not asking whether you generated novel ideas for their own sake. They are asking whether you improved delivery, analysis, or decision quality in an environment with procedures, reputational risk, and public accountability.

Institutional nuance matters

The same label can point to different behaviors depending on the institution, business line, and grade level.

In a policy-heavy unit, collaboration may mean handling strong opinions and building agreement around evidence. In an investment arm, stakeholder management may mean balancing sponsors, credit, legal, environmental and social teams, and management comments. In country-facing operations, client orientation may mean patience, cultural fluency, and realism about what can be implemented on the ground.

Grade level matters too. At junior and mid levels, panels often test whether you can execute reliably, synthesize well, and build trust. At more senior levels, the bar shifts toward judgment, trade-offs, team leadership, and institutional influence. Candidates miss this all the time. They bring a technically solid example to a senior interview, then wonder why the panel keeps probing governance, stakeholder resistance, or political sensitivity.

Read the vacancy like a scoring sheet. Repeated verbs matter. So do nouns. If the role emphasizes implementation support, portfolio monitoring, reform dialogue, or operational quality, prepare examples that show how you improved delivery in a real institutional setting. If the posting refers to results frameworks or impact tracking, it helps to understand how MDB teams assess progress through results-based management in MDB operations.

When a panel asks about results, they usually want evidence that you got a difficult piece of work over the line without losing judgment or stakeholder support.

How to read the job description properly

Use a simple filter.

Underline the action verbs first: lead, coordinate, assess, advise, design, negotiate, monitor. Then mark the relationship demands: government counterparts, private sponsors, internal reviewers, cross-functional teams, development partners. After that, identify the pressure points: deadlines, ambiguity, reform resistance, portfolio complexity, fragile capacity, reputational sensitivity.

Finally, ask a harder question than candidates usually ask. Not “Have I done something similar?” Ask, “Which example proves I can do this in an MDB way?”

That last phrase matters. An MDB answer is not just a story about competence. It is a story about competence under procedure, scrutiny, and competing stakeholder interests. That is why generic STAR preparation often underperforms in these interviews, and why STAR-ER answers score better: they add evaluation and reflection that tie your example to the institution’s context.

How to Map Your Experience to MDB Competencies

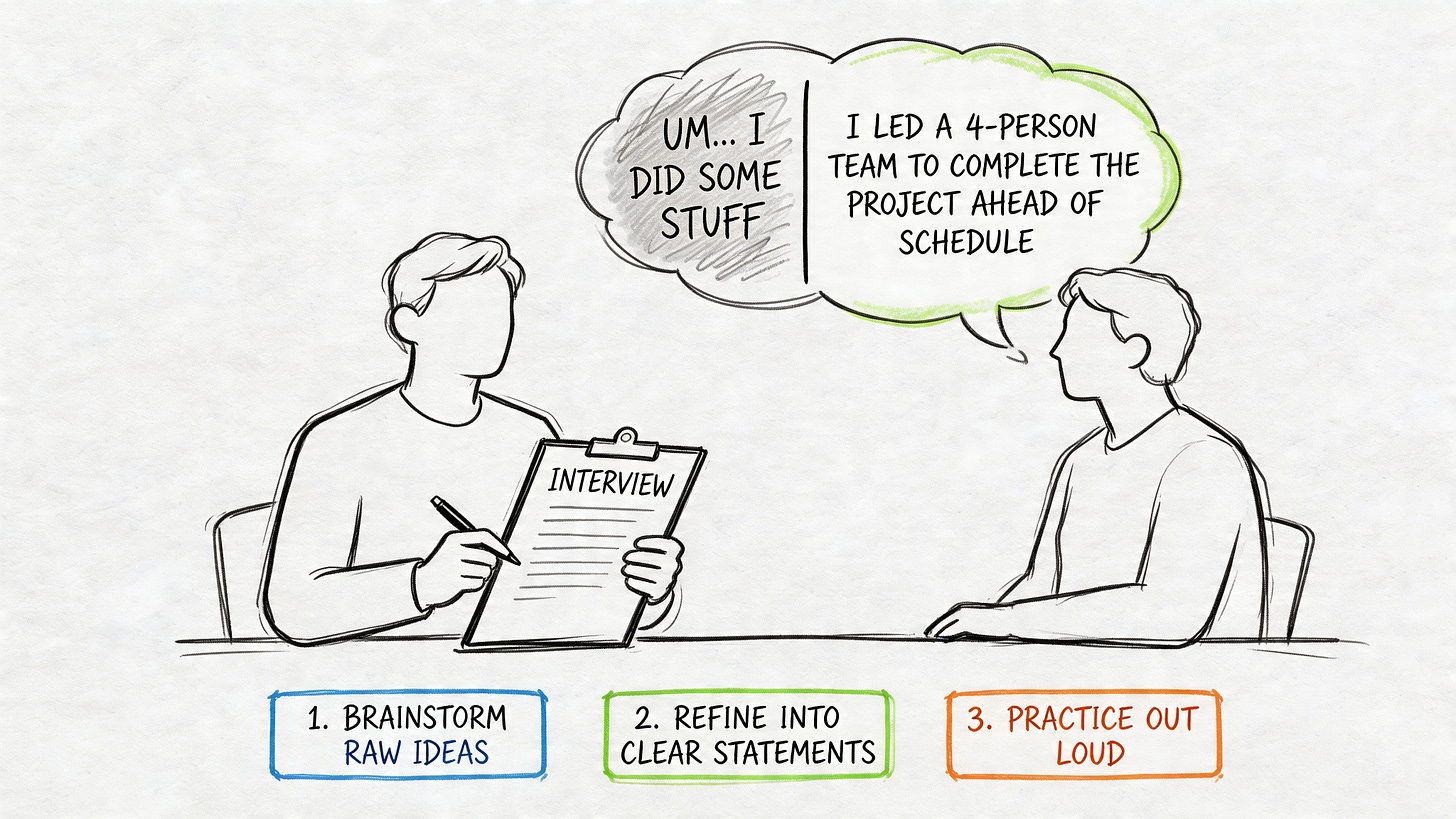

Candidates usually overprepare questions and underprepare evidence. That’s backwards.

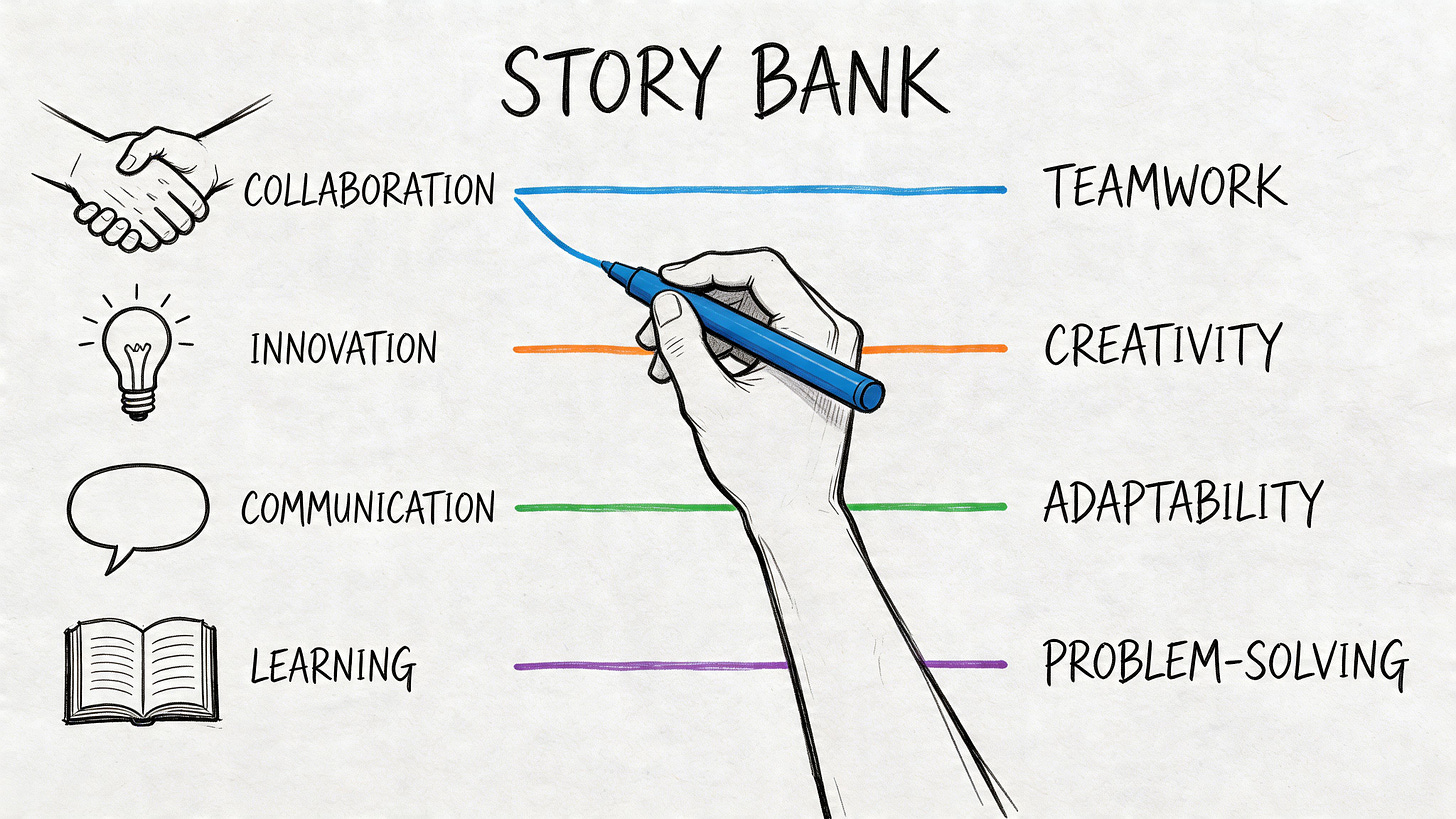

The fastest way to improve your interview performance is to build a story bank before you script anything. That means creating a shortlist of professional episodes you can adapt across multiple questions without sounding repetitive.

Start with raw material, not polished answers

Open a blank document and list your strongest professional episodes. Use projects, crises, negotiations, analytical assignments, team conflicts, turnarounds, launches, or stakeholder-heavy initiatives. Include consulting work, public sector assignments, NGO work, fellowships, and academic projects if they show the right behaviors.

Don’t judge the stories yet. Just capture them.

A strong story bank usually includes examples where you had to:

Influence without authority

Fix a problem under time pressure

Handle disagreement

Translate analysis into action

Coordinate across functions or institutions

Learn quickly in an unfamiliar setting

Tag each story with multiple competencies

One story should rarely serve only one purpose. A procurement reform example might show analytical thinking, stakeholder management, resilience, and results orientation. A mission preparation story might show planning, teamwork, judgment, and communication.

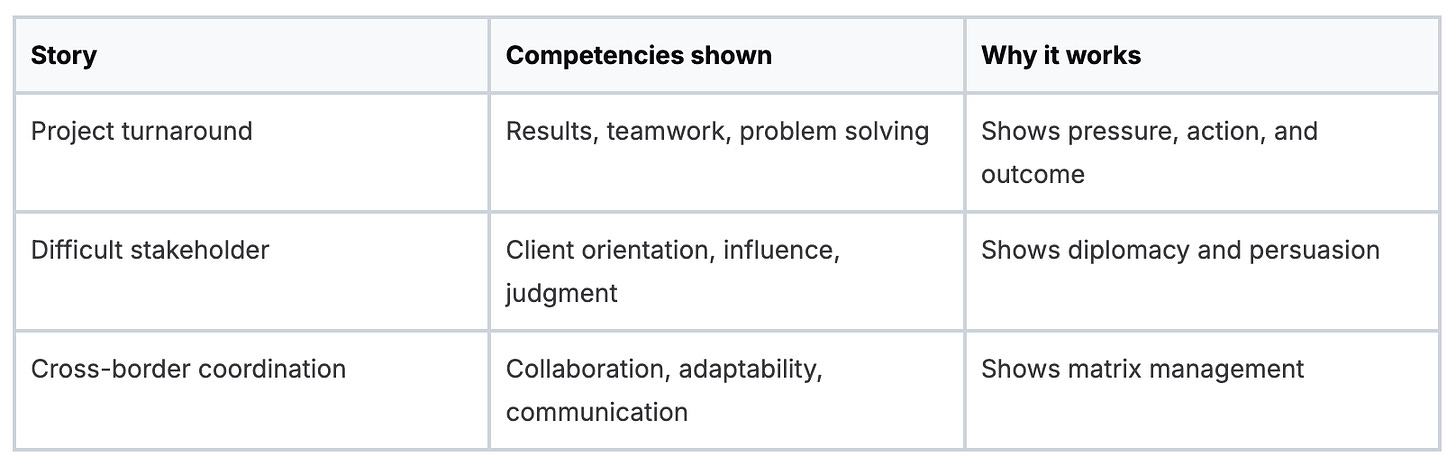

Build a simple matrix with three columns:

Candidates often discover they’ve been underselling their own experience.

Tighten the evidence

Once your list exists, stress-test every story. Ask:

What was the high-stakes moment?

What exactly was my role?

What decisions did I make personally?

What resistance did I face?

What changed because of my actions?

What did I learn that matters for an MDB role?

If a story has no tension, no decision, or no outcome, drop it.

For analytical roles especially, candidates often need better examples of how they moved from diagnosis to action. If that’s a weak spot, this guide on how to improve analytical skills for international development roles can help you sharpen the way you present evidence, not just the analysis itself.

Good preparation gives you range. Great preparation gives you reusable stories with different angles.

Build your final interview inventory

By the end of this exercise, you want a compact set of stories you know cold. Each one should be adaptable. Each one should have a clear role, action, and result. Each one should connect to the competencies in the vacancy.

That’s the foundation. Without it, you’ll improvise. With it, you’ll answer with control.

Mastering the STAR-ER Method for MDB Interviews

You are three minutes into a panel interview at an MDB. The first interviewer asks for a time you influenced a resistant counterpart. You give the background, explain the politics, mention the team’s effort, and reach your conclusion just as the panel chair says, “What exactly did you do?”

That is where many otherwise strong candidates lose control of the interview.

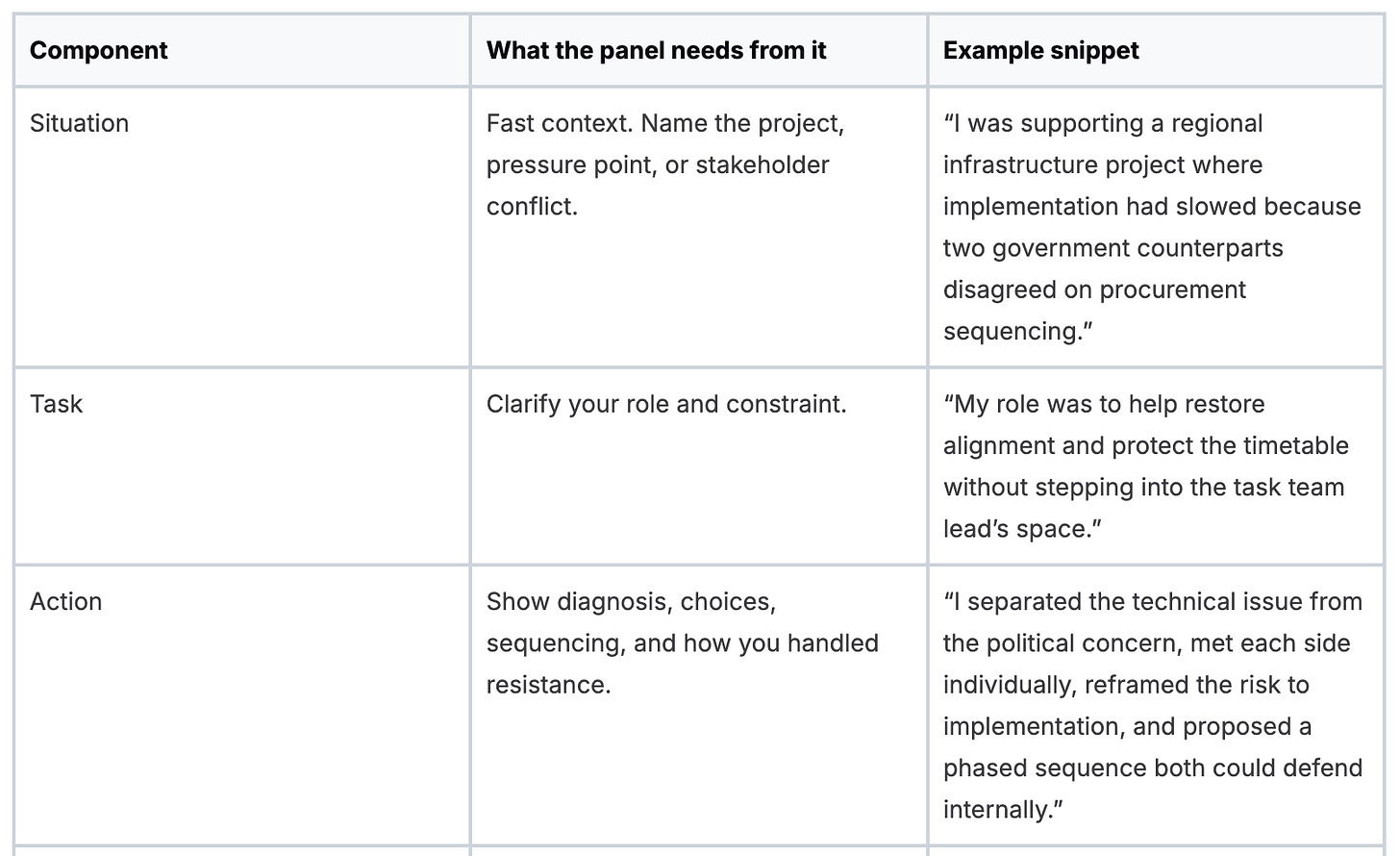

STAR is still useful, but standard STAR is often too thin for MDB panels. These interviews do not only test whether you can tell a coherent story. They test whether you can show judgment, institutional awareness, and the ability to learn in politically sensitive, multi-stakeholder environments. That is why I coach candidates to use STAR-ER:

Situation

Task

Action

Result

Evaluation

Reflection

The extra two steps matter. In MDB hiring, a good outcome is not enough. Panelists also want to hear why your approach worked, what trade-off you managed, and what you would adjust next time.

Use STAR-ER with proportion

Candidates usually fail in one of three ways. They over-explain the context, hide behind “we,” or stop at the result. All three weaken your score because competency panels are marking observed behavior, not general professionalism.

A disciplined answer gives the panel enough context to follow the story, then spends most of the time on your decisions and execution.

Use this rough weighting when you speak:

Situation: 10 to 15 percent

Task: 10 percent

Action: 45 to 50 percent

Result: 15 to 20 percent

Evaluation and Reflection: 10 to 15 percent combined

This is a speaking framework, not a script. If the case was politically messy, you may need a little more context. If the competency is about delivery under pressure, the action and result should dominate. The point is control.

Why MDB panels respond well to STAR-ER

A private sector panel may be satisfied with a clean win. MDB panels usually probe further. They want to know how you handled competing mandates, how you worked across internal and external stakeholders, and whether you can operate without creating avoidable friction.

That changes the standard advice.

A textbook STAR answer often sounds polished but generic. A strong MDB answer sounds specific, institutional, and sober. It shows that you understand the difference between solving a problem and solving it in a way that others can live with.

What each part needs to do

Situation and Task

Keep these tight.

The panel does not need a full history of the program, the donor environment, and every stakeholder involved. It needs enough information to understand the stakes, your mandate, and the constraint you were working under.

Weak version: “We had a very complex program with many stakeholders, and over time several issues came up.”

Stronger version: “I was supporting a policy reform workstream that had stalled because the ministry and donor group wanted different sequencing.”

The second version does three things quickly. It sets the scene, identifies tension, and gives the panel a reason to listen for your intervention.

Action

This is the center of the answer.

For MDB interviews, good action sections usually include four elements:

Diagnosis. What did you work out that others had missed or blurred?

Decision. What approach did you choose, and why that one?

Execution. What did you do, step by step?

Adjustment. What changed when resistance appeared or facts shifted?

This is also where many candidates damage good stories by speaking in team language. MDB work is collaborative, but competency interviews still assess individual contribution. Use “we” only when the distinction matters, then return to “I” to show ownership of your actions.

Strong action language sounds operational. Use concrete verbs. Assessed. Prioritized. Escalated. Reframed. Negotiated. Drafted. Sequenced. Pressed for decision. Adjusted the approach.

A high-scoring answer is built from decisions.

Result

Results need substance. “It went well” is not substance.

State what changed, why it mattered, and how it connected to the institution’s objective. For MDB roles, that usually means one of a few things: a blockage cleared, a decision moved forward, a counterpart re-engaged, internal alignment improved, implementation risk dropped, or a deliverable became usable by decision-makers.

Use metrics if you have them and if they are yours to cite. If you do not, qualitative outcomes are fine. Just make them precise.

For example:

“The revised note secured director-level clearance on the first review.”

“The client accepted the sequencing change, which allowed procurement to proceed without reopening the financing discussion.”

“The workshop shifted the discussion from positions to options, and we got agreement on the next two milestones.”

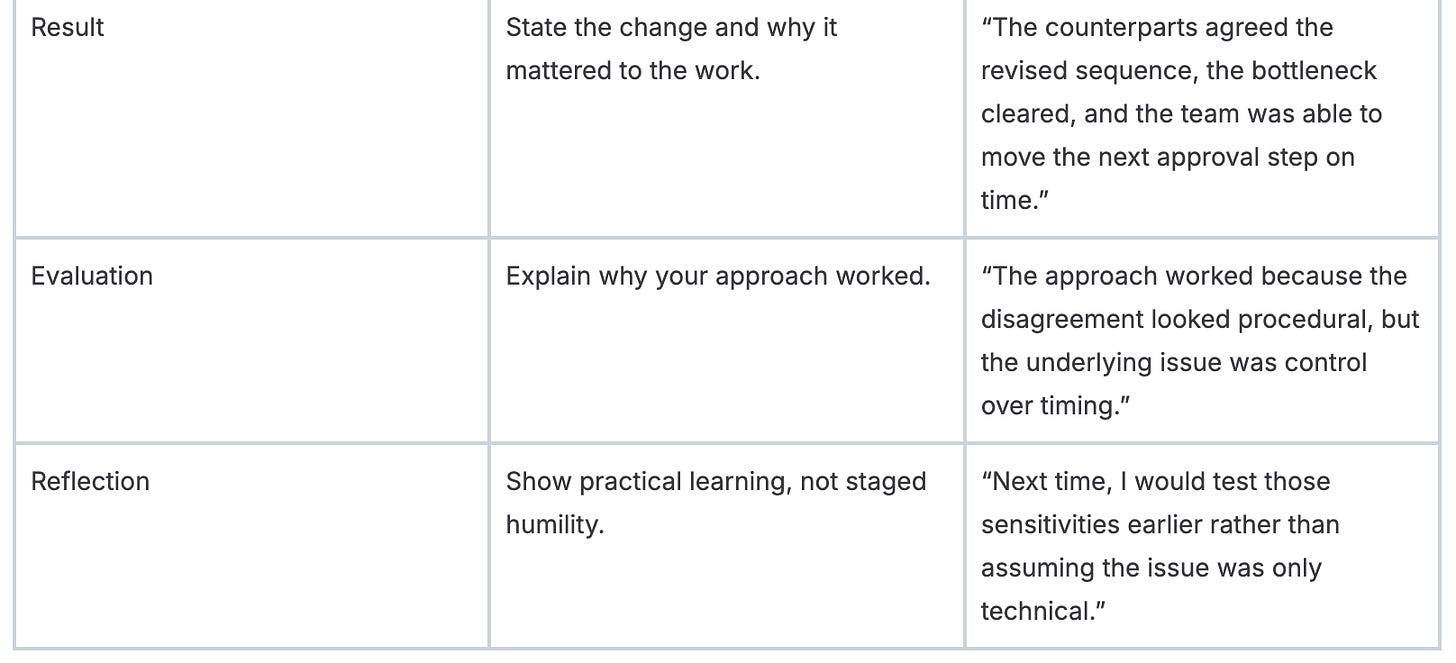

Evaluation and Reflection

Here, STAR becomes STAR-ER, and experienced candidates pull ahead.

Evaluation explains the mechanism. Why did your approach work? Did you reduce defensiveness by changing the process? Did you gain trust by consulting earlier? Did you avoid escalation by narrowing the decision?

Reflection shows that you can improve your own practice. Good reflection is specific and unsentimental. It should sound like a professional who has learned something useful, not a candidate trying to perform self-awareness.

Good examples:

“I should have brought legal in earlier, because the delay later looked substantive when it was really a clearance issue.”

“I would shorten the first draft cycle next time. The team needed a decision memo, not a long technical note.”

“I learned that resistance from a counterpart is often a signal about process or timing, not disagreement with the substance.”

That is the level MDB panels respect.

A full model answer

Take the question: Tell me about a time you had to influence a difficult stakeholder.

A weak answer sounds like this: “I worked with a difficult counterpart, listened carefully, communicated well, and eventually got buy-in.”

A stronger STAR-ER answer sounds like this:

In a regional development project, I was coordinating input for a reform milestone that required sign-off from a counterpart who had become resistant after earlier delays. My task was to keep the work moving while rebuilding trust and avoiding a public escalation.

I reviewed the prior exchanges and saw that the resistance was not mainly about the technical content. The counterpart felt they were being asked to react too late to a near-final product. I changed the process. I set up a short call to clarify the specific points of concern, then sent a revised draft that showed exactly where their input would shape the recommendation. I also narrowed the decision points so they were not reacting to an overloaded document. Before the next discussion, I aligned internally with colleagues so we presented one coherent position.

The stakeholder re-engaged, gave substantive comments, and we finalized the workstream without further delay. The most important result was that the process became constructive again, which mattered because we needed continued cooperation beyond that milestone.

What worked was treating the resistance as a process problem, not a personality problem. I learned that difficult stakeholders often respond once they are consulted early and clearly. Next time, I would involve them in framing the decision before a full draft exists.

That answer works because it fits the way MDB panels listen. It shows diagnosis, judgment, execution, institutional awareness, and learning. It also gives different panel members something to score. One hears stakeholder management. Another hears delivery discipline. A third hears reflection and maturity.

That is the standard to aim for.

Your Mock Interview and Practice Regimen

Preparation on paper is not enough. You need spoken reps.

A good answer in your notes can fall apart when you’re interrupted, challenged, or asked to be more specific. That’s why practice has to simulate pressure, not just review content.

Run mocks that feel uncomfortable

The best mock interviews are slightly annoying. The other person should interrupt, ask follow-ups, and press for clarity. If the mock feels smooth and supportive, it’s probably too easy.

Tell your practice partner to probe in ways real panelists do:

“What exactly did you do?”

“How did you decide that?”

“What resistance did you face?”

“What was the outcome?”

“What would you do differently?”

That pressure exposes weak stories fast.

Record yourself and review like an assessor

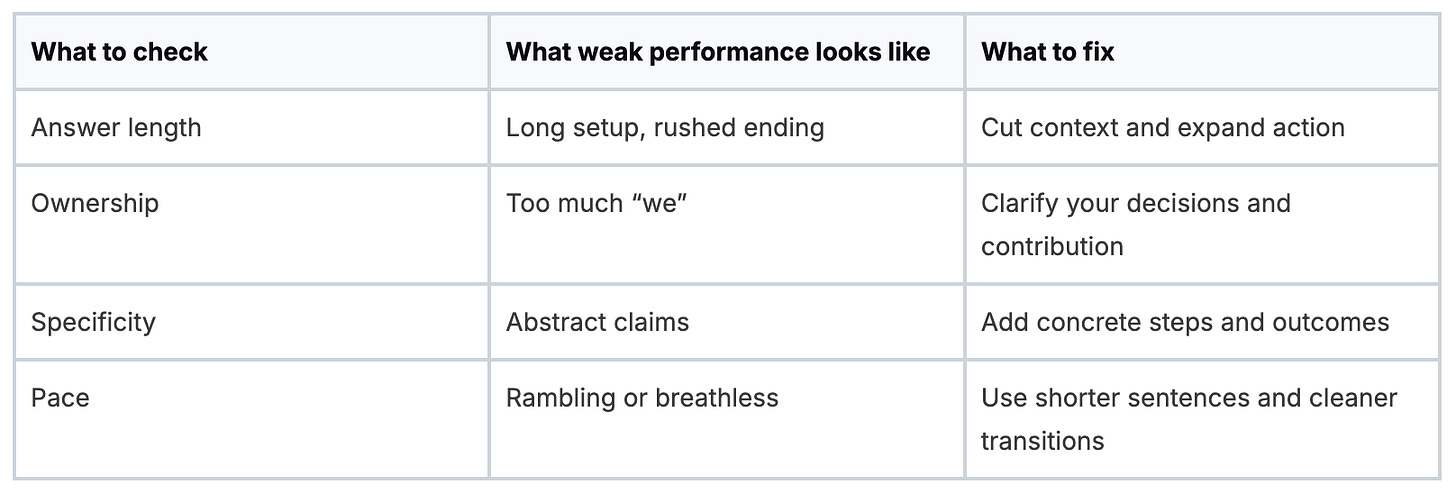

Record at least some of your practice sessions. Don’t watch for appearance. Watch for structure.

Review for these issues:

Build a repeatable routine

A solid regimen looks like this:

Story rehearsal: speak each core story out loud until the sequence is stable

Question drills: answer unfamiliar prompts using your existing story bank

Follow-up drills: practice defending the decisions in your examples

Panel simulation: have more than one person ask questions in sequence

One useful option for role-specific preparation is to review vacancy analyses and interview guidance from specialist publications. Multilateral Development Bank Jobs publishes MDB and consultant listings plus career guides, which can help you pressure-test whether your examples match the kinds of roles you’re targeting.

If your answer only works when delivered from memory, it’s too fragile for a real panel.

Practice until you sound natural

There’s a balance here. You want command, not recitation.

Good practice makes your answers easier to adapt. Bad practice makes them rigid. The fix is simple. Don’t memorize exact wording. Memorize structure, evidence, and the turning points in each story. Then say it a little differently each time.

That’s how you stay polished without sounding rehearsed.

Navigating Panel Dynamics and Common Interview Traps

The panel chair asks about stakeholder management. A technical specialist is scanning your evidence. HR is watching how you structure your answers under pressure. You answer the question you prepared, not the one they asked. That is how strong candidates lose points in MDB interviews.

Generic interview advice assumes a one-to-one conversation. MDB panels do not work that way. Each member is testing a different risk. Can you operate across cultures? Can you defend judgment under scrutiny? Will you represent the institution well with governments, donors, and internal counterparts? Panels score behaviors, so your examples need substance, judgment, and control.

This is also where standard STAR advice falls short. In MDB interviews, a tidy story is not enough. A panel wants to hear what you did, why you chose that route, what changed, and what you learned that would make you more effective in the role. That is why STAR-ER works better here. It gives the panel evidence of execution and reflection, which matters in institutions that value judgment as much as delivery.

Speak to the panel, not to your rehearsal

Robotic answers fail because they sound detached from the question. Panelists hear the template before they hear the substance.

Keep the structure, not the script. Open with a direct answer. Give only the context needed to understand the problem. Spend the bulk of your time on your actions, your judgment, and your result. Then add the extra layer from STAR-ER: explain what you would repeat, what you would change, or what the experience taught you about working in a complex institution.

That final reflection often separates polished candidates from appointable ones.

Manage attention across the room

A panel interview is partly an answering exercise and partly a room-reading exercise. Start with the person who asked the question. Then bring the rest of the panel in as you explain the action and result. End by returning to the questioner. It sounds simple, but it gives your answer shape and shows composure.

Use these rules:

Answer the asked question first: do not force a pre-rehearsed example if the competency is different

Share eye contact across the panel: one person may lead the question, but all of them are scoring

Keep your pace measured: fast answers sound defensive, especially on difficult examples

Pause before your result and reflection: it signals that you know where the point of the story is

Virtual panels need even more discipline. Look into the camera when making your main point. Keep notes off-screen or on a single printed page. If audio overlaps, let the panel finish and then respond calmly. Small handling choices shape how credible you appear.

The traps that catch good candidates

The first trap is answering at the wrong altitude. Some candidates stay too strategic and never show behavior. Others drown the panel in operational detail and hide the judgment. MDB panels usually want both. Give enough context to show complexity, then explain the specific choices you made inside it.

The second trap is shared ownership without individual accountability. Panel members know MDB work is collaborative. They still need to isolate your contribution.

Weak answer: “We worked with the ministry and aligned the team around a revised approach.”

Stronger answer: “I identified the point of disagreement, reset the consultation sequence, and drafted the compromise option that the country office and ministry accepted.”

The third trap is a result with no evidence of learning. For MDB roles, especially at mid and senior levels, outcomes matter but reflection also matters. A candidate who can say, “The project moved forward, but I would escalate the procurement risk earlier next time,” sounds more mature than one who presents every example as a clean success.

The fourth trap is getting rattled by panel behavior. One person may interrupt. Another may stare at their notes. A third may look unconvinced. Do not overread it. Many panelists are writing against score sheets, not reacting to you personally. If you want a clearer view from the other side of the table, read this interview with an MDB panellist on what gets noticed in interviews.

Recover cleanly if an answer goes off track

Recovery matters. I have seen candidates save an interview by correcting themselves early instead of defending a weak answer.

Use a simple reset line:

“Let me answer that more directly. My role was to resolve the impasse, and the result was a revised implementation plan approved within two weeks.”

That works because it shows control. It also helps the panel score you on the competency instead of trying to decode a messy example.

One final rule. Do not treat every panel member the same. If the technical expert follows up, go deeper on method or analysis. If HR follows up, clarify behavior, influence, or conflict handling. If the chair follows up, connect your example back to institutional judgment and delivery. The best candidates adjust their emphasis in real time while staying consistent in substance and tone.

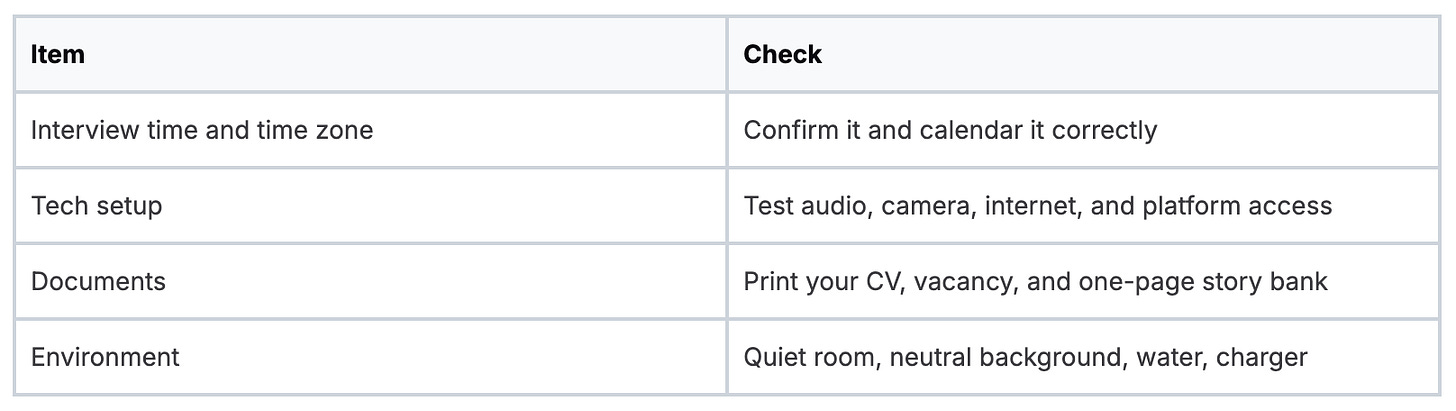

Your Final Pre-Interview Checklist

The last stretch is for sharpening, not cramming. If you’re trying to invent new stories the night before, you started too late.

Use the final day to lock in execution.

What to review

Core stories: rehearse your strongest examples until you can deliver them cleanly without sounding scripted

Competency match: make sure each major requirement in the vacancy has at least one story behind it

Opening and closing: know how you’ll introduce yourself and how you’ll answer “why this role”

Questions for the panel: prepare thoughtful questions about team priorities, delivery challenges, or role expectations

What to prepare physically

What to avoid

Last-minute rewrites: they make your answers less stable

Overloaded notes: they pull your attention off the panel

Generic questions: avoid asking things already obvious from the website or vacancy

Forced perfection: a composed answer beats a memorized one

Walk in ready to do three things well. Answer the actual question. Show your role clearly. End with a real result and a thoughtful reflection.

That’s how strong candidates separate themselves.

If you’re targeting roles at the World Bank, IMF, ADB, AfDB, AIIB, or related institutions, Multilateral Development Bank Jobs is a practical way to track openings and build a more focused application strategy. The publication shares full-time MDB vacancies, consultant opportunities, and career guides that can help you prepare for the recruitment process with more precision.